K-means clustering is one of the most widely used unsupervised learning algorithms in data science. It helps you discover structure in data when you do not have labelled outcomes. Instead of predicting a target, k-means groups similar data points into k distinct clusters based on distance. This makes it useful for tasks like customer segmentation, document grouping, image compression, and anomaly exploration. If you are learning clustering through a data science course or applying it in real projects during a data scientist course in Pune, k-means is often the first algorithm you will implement because it is intuitive, fast, and broadly applicable.

What K-Means Does and Why It Works

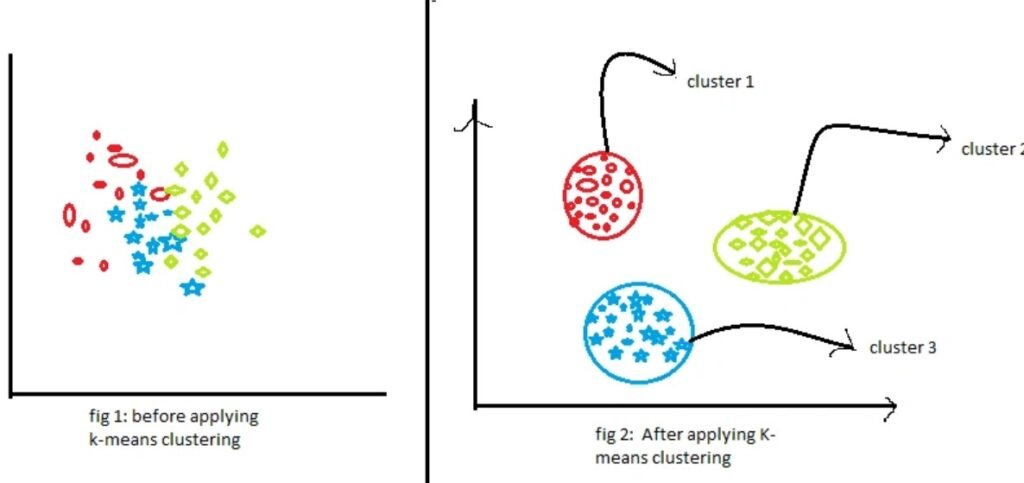

At its core, k-means aims to split a dataset into k groups so that points within a cluster are as similar as possible, while clusters remain as different as possible. Similarity is typically measured using Euclidean distance, though other distance measures can be used in some variants.

The algorithm works by iteratively updating two things:

- Cluster assignments: which cluster each data point belongs to

- Cluster centres (centroids): the “average” position of all points in that cluster

The objective is to minimise the within-cluster sum of squares (WCSS): the sum, over all points, of the squared distance between each point and its assigned cluster centre. In simple terms, k-means tries to keep points as close as possible to their assigned centre.
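To make the objective concrete, here is a minimal sketch of a WCSS calculation in plain Python. The function name `wcss` and the toy data are illustrative assumptions, not part of any library.

```python
def wcss(points, labels, centroids):
    """Within-cluster sum of squares: total squared Euclidean distance
    from each point to the centroid of its assigned cluster."""
    total = 0.0
    for point, label in zip(points, labels):
        centroid = centroids[label]
        total += sum((p - c) ** 2 for p, c in zip(point, centroid))
    return total


# Illustrative toy data: two clusters of two points each.
points = [(1.0, 1.0), (1.0, 3.0), (5.0, 5.0), (7.0, 5.0)]
labels = [0, 0, 1, 1]
centroids = [(1.0, 2.0), (6.0, 5.0)]  # the mean of each cluster

print(wcss(points, labels, centroids))  # 4.0: every point is 1 unit from its centroid
```

Swapping the labels of the two middle points raises the value sharply, which is exactly what k-means tries to avoid.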

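Each assignment step and each update step can only lower this objective or leave it unchanged, so the algorithm always converges, although only to a local minimum that depends on the starting centroids. In practice it often finds useful groupings even when data is messy. However, it is important to remember that k-means does not “understand” meaning. It simply groups based on distance in feature space.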

The K-Means Algorithm Step by Step

A practical way to understand k-means is to walk through the loop it follows:

- Choose k: Decide how many clusters you want.

- Initialise centroids: Pick k starting points (randomly or using a smarter method like k-means++).

- Assign points: Each data point is assigned to the nearest centroid.

- Update centroids: For each cluster, compute the mean of its points and set that as the new centroid.

- Repeat: Continue the assign and update steps until assignments stop changing, or improvement becomes negligible.

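The strength of k-means is that each iteration is simple and computationally efficient: the cost of one pass grows linearly with the number of points, the number of clusters, and the number of features. It scales well to large datasets, which is one reason it remains popular in industry.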
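The loop described above can be sketched in a few lines of plain Python. This is a teaching sketch under simple assumptions (random initialisation from the data, Euclidean distance, a hypothetical function name `kmeans`), not a replacement for a tested library implementation such as scikit-learn's `KMeans`.

```python
import math
import random


def kmeans(points, k, max_iter=100, seed=0):
    """Minimal k-means on a list of equal-length numeric tuples."""
    rng = random.Random(seed)
    # Initialise centroids: pick k distinct data points at random.
    centroids = rng.sample(points, k)
    labels = None

    for _ in range(max_iter):
        # Assign points: each point goes to its nearest centroid.
        new_labels = [
            min(range(k), key=lambda j: math.dist(p, centroids[j]))
            for p in points
        ]
        # Stop once assignments no longer change.
        if new_labels == labels:
            break
        labels = new_labels

        # Update centroids: mean of the points assigned to each cluster.
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:  # keep the old centroid if a cluster ends up empty
                centroids[j] = tuple(sum(dim) / len(members) for dim in zip(*members))

    return labels, centroids


# Illustrative toy data: two well-separated groups of three points.
points = [(1.0, 1.0), (1.5, 2.0), (1.0, 1.5), (8.0, 8.0), (9.0, 8.5), (8.5, 9.0)]
labels, centroids = kmeans(points, k=2)
print(labels)
print(centroids)
```

On this toy data the first three points end up sharing one label and the last three share the other. Which group is called 0 and which is called 1 depends on the random starting centroids.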

How to Choose the Right Value of K

Choosing k is the main design decision. Too few clusters can hide patterns, while too many can create over-segmentation that is hard to interpret.

Common approaches include:

- Elbow method: Plot WCSS against different k values. Look for a point where adding more clusters yields diminishing returns.

- Silhouette score: Measures how close each point is to its own cluster compared with the nearest other cluster. Scores range from -1 to 1, and higher scores generally indicate better separation.

- Domain interpretation: A clustering solution should be explainable. Sometimes the “best” k statistically is not the most useful operationally.

In a business segmentation task, for example, a model that produces five meaningful customer groups might be more actionable than one that produces twelve weakly distinct groups. In a data science course, this is often taught as the difference between mathematical fit and practical value.
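To show how a silhouette score separates a good value of k from an over-segmented one, here is a plain-Python sketch. The function name `mean_silhouette` and the toy data are assumptions for illustration. The sketch also assumes at least two clusters and no duplicate points. In practice you would more likely use a library routine such as `silhouette_score` from `sklearn.metrics`.

```python
import math


def mean_silhouette(points, labels):
    """Mean silhouette coefficient over all points.

    For each point: a = mean distance to the other points in its own cluster,
    b = lowest mean distance to the points of any other cluster,
    s = (b - a) / max(a, b). Points in single-point clusters score 0.
    """
    clusters = {}
    for p, lab in zip(points, labels):
        clusters.setdefault(lab, []).append(p)

    scores = []
    for p, lab in zip(points, labels):
        own = clusters[lab]
        if len(own) == 1:
            scores.append(0.0)
            continue
        # The distance from p to itself is 0, so dividing by len(own) - 1
        # gives the mean distance to the *other* members.
        a = sum(math.dist(p, q) for q in own) / (len(own) - 1)
        b = min(
            sum(math.dist(p, q) for q in other) / len(other)
            for other_lab, other in clusters.items()
            if other_lab != lab
        )
        scores.append((b - a) / max(a, b))
    return sum(scores) / len(scores)


# Illustrative toy data: two well-separated groups of three points.
points = [(1.0, 1.0), (1.5, 2.0), (1.0, 1.5), (8.0, 8.0), (9.0, 8.5), (8.5, 9.0)]

two_clusters = [0, 0, 0, 1, 1, 1]    # matches the visible structure
three_clusters = [0, 0, 0, 1, 1, 2]  # splits the second group unnecessarily

print(mean_silhouette(points, two_clusters))
print(mean_silhouette(points, three_clusters))
```

The two-cluster labelling scores much higher than the three-cluster one, which is the signal you would look for when comparing candidate values of k.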

Best Practices for Real-World Use

K-means works best when data is prepared carefully. These practices reduce avoidable errors:

- Scale features: Since k-means is distance-based, features with larger ranges dominate. Use standardisation or normalisation.

- Handle outliers: Outliers can pull centroids away from dense regions. Remove, cap, or treat outliers before clustering.

- Use good initialisation: Random initialisation can lead to poor local minima. K-means++ is commonly used to improve starting points.

- Run multiple times: Because results can vary by initialisation, run k-means with several random seeds and keep the run with the lowest WCSS.
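The initialisation advice above can be illustrated with a short sketch of k-means++ style seeding: the first centroid is chosen uniformly at random, and each later one is sampled with probability proportional to its squared distance from the nearest centroid already chosen. The function name `kmeans_pp_init` is hypothetical, and the sketch assumes the data contains at least k distinct points.

```python
import math
import random


def kmeans_pp_init(points, k, seed=0):
    """Pick k starting centroids with k-means++ style seeding."""
    rng = random.Random(seed)
    centroids = [rng.choice(points)]  # first centroid: uniform at random
    while len(centroids) < k:
        # Squared distance from each point to its nearest chosen centroid.
        d2 = [min(math.dist(p, c) ** 2 for c in centroids) for p in points]
        # Sample the next centroid with probability proportional to d2, so
        # far-away points are favoured and already-chosen points have zero weight.
        centroids.append(rng.choices(points, weights=d2, k=1)[0])
    return centroids


# Illustrative toy data: two well-separated groups of three points.
points = [(1.0, 1.0), (1.5, 2.0), (1.0, 1.5), (8.0, 8.0), (9.0, 8.5), (8.5, 9.0)]
print(kmeans_pp_init(points, k=3))
```

Spreading the starting centroids out in this way tends to reduce the chance of a poor local minimum, though running several seeds is still worthwhile.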

Many learners first apply k-means directly and then wonder why clusters look strange. In practice, the quality of clustering depends heavily on preprocessing and feature selection. This is a key skill reinforced in a data scientist course in Pune, where projects often involve real datasets with inconsistent scaling and noise.
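A small example shows why scaling matters so much. The sketch below uses hypothetical customer data (age in years, annual income) and a hand-written `standardise` helper. Both are assumptions for illustration. Before scaling, income dominates the distance. After scaling, age counts as well.

```python
import math
import statistics


def standardise(rows):
    """Rescale each column to mean 0 and standard deviation 1 (z-scores)."""
    columns = list(zip(*rows))
    means = [statistics.mean(col) for col in columns]
    stdevs = [statistics.pstdev(col) for col in columns]
    return [
        tuple((value - m) / s for value, m, s in zip(row, means, stdevs))
        for row in rows
    ]


def nearest_other(rows, i):
    """Index of the row closest to rows[i] by Euclidean distance."""
    return min(
        (j for j in range(len(rows)) if j != i),
        key=lambda j: math.dist(rows[i], rows[j]),
    )


# Hypothetical customers: (age in years, annual income).
customers = [
    (25, 40_000),
    (60, 41_000),
    (27, 45_000),
    (58, 90_000),
    (40, 95_000),
]

print(nearest_other(customers, 0))               # 1: income dominates, so the 60-year-old looks closest
print(nearest_other(standardise(customers), 0))  # 2: after scaling, the 27-year-old is closest
```

Because k-means assigns points by the same kind of distance, unscaled features would steer the clusters in the same misleading way.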

Limitations You Should Know

Despite its usefulness, k-means is not a universal solution.

- Assumes spherical clusters: It works best when clusters are roughly round and similar in size.

- Sensitive to scaling and feature choice: Poorly engineered features lead to misleading clusters.

- Struggles with non-convex shapes: If clusters form complex patterns such as crescents or rings, algorithms like DBSCAN or spectral clustering can perform better.

- Needs k in advance: You must decide k before running it, which can be difficult without exploration.

- Not ideal for categorical data: Standard k-means expects numeric features, because a mean is not defined for categories. For categorical data, alternatives like k-modes or mixed-type approaches such as k-prototypes are better.

Understanding these limitations prevents overuse. Clustering is exploratory by nature, and k-means is one tool among many.

Conclusion

K-means clustering is a foundational unsupervised learning algorithm that partitions data into k groups based on distance to cluster centres. It is easy to implement, scales well, and provides practical insights in areas like segmentation and grouping. However, its results depend strongly on choosing a good k, scaling features, and ensuring the data fits its assumptions. Whether you are learning the basics through a data science course or applying clustering in projects during a data scientist course in Pune, mastering k-means gives you a strong starting point for understanding how unsupervised learning reveals structure in real data.
